It’s been more than three years since my last post. Since then, so much has changed in the world of software. This website is due for a revamp, but first, I’d like to share lessons from working on a side project over the last couple of weekends.

On a related note: Despite what LLMs have to offer today, I plan to just keep journaling in this little space of mine with my own voice, because I believe that 1) active recall helps with learning and 2) writing from a blank slate is a skill — we lose it if we don’t use it. That said, I plan to use AI tools to aid my writing in a limited capacity, mainly to check for grammatical errors.

Recently, I’ve been trying to get my hands dirty with making AI agents. I think it’s an exciting space, filled with unanswered questions about how user experiences should best be defined. What better way to grapple with these problems than to build a full-stack agent on my own?

Introducing CABAL

Let me begin with my vision. My goal is to build an AI agent that channels the personality of CABAL, an AI entity from the Command and Conquer: Tiberian Sun universe.

I think I’ve made a hell of a choice, because CABAL is more than just an AI program — he’s a mix of man and machine, and he has quite the superiority complex. I think he’s a fun character to work with because he’s got a lot more personality compared to most AI assistants portrayed in popular media.

If you’re curious, here’s CABAL’s first scene from Tiberian Sun:

Perfectly on brand for the 90s (think Terminator). You’ll be relieved to know that what I’m building is far more harmless.

To build CABAL, I’ve created a monorepo on GitHub that breaks his core components down into subpackages:

- @cabal-ai-agent/cabal-core, which contains CABAL’s web server and agent code
- @cabal-ai-agent/cabal-harvester, which is mostly a utility package for scraping data
- @cabal-ai-agent/cabal-infra, which manages CABAL’s CDK code
- @cabal-ai-agent/montauk-ui, which contains CABAL’s UI

This is very much a work in progress — I’m barely halfway through completing a minimum viable product. That said, I’d like to start putting out some commentary anyway because there’s so much I’ve learned since starting this project.

Why Knowledge Bases?

In this post, I’ll focus on the learnings I’ve gained from working with AWS Bedrock, OpenSearch, and S3. I plan to discuss the other components (AWS Lambda, Python setup, React UI, etc.) in later posts.

For CABAL to operate beyond being a regular LLM with a fancy system prompt, we’ll need to equip him with knowledge of the Tiberian Sun universe. This is where setting up a knowledge base (or “KB” for short) comes in handy. You can think of this as the equivalent of giving a customer support chatbot on a product website an understanding of customer FAQs.

Let’s zoom into my CDK code. You’ll see that I have a CabalStorageStack and a CabalKnowledgeStack. The storage stack defines where both the raw and the vectorized data live.

To create a knowledge base, we need data, but what do we mean by vectorized data?

What is Vectorized Data?

Our raw data (e.g. articles about Tiberian Sun) can come in any text-based format, but it needs to be vectorized so that it can be easily matched to queries. Vectorization means representing pieces of text (words, sentences, or whole passages) as coordinates in a high-dimensional space. In this space, similar concepts sit closer to each other (e.g. “dog” would more likely be located near “cat” than near “pencil”).
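To make “closeness” concrete, here’s a tiny sketch using cosine similarity, a common way to measure how closely two vectors point in the same direction. The three-dimensional vectors are made up purely for illustration; real embedding models output hundreds or thousands of dimensions.

```typescript
// Toy 3-dimensional "embeddings". The numbers are invented for illustration;
// a real embedding model would produce these vectors from text.
type Vector = number[];

const embeddings: Record<string, Vector> = {
  dog: [0.9, 0.8, 0.1],
  cat: [0.85, 0.75, 0.2],
  pencil: [0.1, 0.2, 0.95],
};

// Cosine similarity: close to 1 when two vectors point the same way,
// close to 0 when they are unrelated.
function cosineSimilarity(a: Vector, b: Vector): number {
  if (a.length !== b.length) {
    throw new Error("Vectors must have the same number of dimensions");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

const dogCat = cosineSimilarity(embeddings.dog, embeddings.cat);
const dogPencil = cosineSimilarity(embeddings.dog, embeddings.pencil);
console.log(`dog vs cat:    ${dogCat.toFixed(3)}`);
console.log(`dog vs pencil: ${dogPencil.toFixed(3)}`);
```

With these toy numbers, “dog” scores much higher against “cat” than against “pencil”, which is exactly the property a vector store relies on.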

When our agent needs to look up information, it searches for the stored pieces of text whose vectors lie closest to the user’s query and uses them to form a response.
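That lookup-then-answer loop is usually called retrieval-augmented generation (RAG). Below is a minimal sketch of the idea, assuming made-up chunk texts, made-up vectors, and a hypothetical prompt format; in CABAL the vectors would come from the embedding model and the nearest-neighbor search would run inside OpenSearch rather than in application code.

```typescript
// Minimal RAG sketch: rank stored chunks by similarity to the query vector,
// then paste the best matches into the prompt sent to the LLM.
// All texts and vectors here are invented for illustration.
interface Chunk {
  text: string;
  vector: number[];
}

// Cosine similarity between two vectors of equal length.
function cosine(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Score every chunk against the query and keep the k closest.
function topK(queryVector: number[], chunks: Chunk[], k: number): Chunk[] {
  return chunks
    .map((chunk) => ({ chunk, score: cosine(queryVector, chunk.vector) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((scored) => scored.chunk);
}

// Build a prompt that asks the LLM to answer from the retrieved chunks.
function buildPrompt(question: string, context: Chunk[]): string {
  const sources = context.map((c, i) => `[${i + 1}] ${c.text}`).join("\n");
  return `Answer using the sources below.\n${sources}\n\nQuestion: ${question}`;
}

const wikiChunks: Chunk[] = [
  { text: "Wiki excerpt: CABAL overview", vector: [0.9, 0.1, 0.2] },
  { text: "Wiki excerpt: Tiberium overview", vector: [0.1, 0.9, 0.3] },
  { text: "Wiki excerpt: CABAL's role in the campaign", vector: [0.8, 0.2, 0.1] },
];
// Pretend this is the embedding of the question "Who is CABAL?".
const queryVector = [0.85, 0.15, 0.15];

console.log(buildPrompt("Who is CABAL?", topK(queryVector, wikiChunks, 2)));
```

The LLM never sees the vectors themselves; it only sees the text of whichever chunks landed closest to the query.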

Knowledge Base Data Storage

Let’s come back to the Storage Stack. I use S3 to store data scraped from the Command & Conquer Wiki and OpenSearch to store the vectorized version of that data.

AWS S3 is (literally) a simple storage solution, while OpenSearch is an open-source search engine that also supports storing and looking up vectorized data. As an AWS service, AWS OpenSearch hosts OpenSearch for you, either as provisioned clusters or in serverless form.

At present, this is my S3 setup:

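```typescript
this.nodS3Bucket = new s3.Bucket(this, "NodS3Bucket", {
  // Delete the bucket when the stack is destroyed...
  removalPolicy: cdk.RemovalPolicy.DESTROY,
  // ...and empty it first, since CloudFormation can't delete a non-empty bucket.
  autoDeleteObjects: true,
});
```

And here’s my OpenSearch setup: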

```typescript
this.nodOpenSearchDomain = new opensearch.Domain(
  this,
  "NodOpenSearchDomain",
  {
    version: opensearch.EngineVersion.OPENSEARCH_2_11,
    // For CPU and RAM, we go with one t3.small.search instance since it's
    // small enough to not be too costly ($26/month) but large enough to
    // run OpenSearch with 2 GB memory.
    // Also, note that S3 vector stores were considered, but we opted to
    // remain on OpenSearch (while ditching serverless) to retain
    // hybrid (i.e. semantic + keyword) search functionality.
    capacity: {
      dataNodeInstanceType: "t3.small.search",
      dataNodes: 1,
    },
    // For storage, we'll use the minimum for this instance (10 GB).
    ebs: {
      volumeSize: 10,
      volumeType: EbsDeviceVolumeType.GENERAL_PURPOSE_SSD_GP3,
    },
    // ...
  },
);
```

That lengthy comment about going with a specific instance type stems from a painful lesson I learned about serverless hosting and setting up billing alerts.

Optimizing for Cost

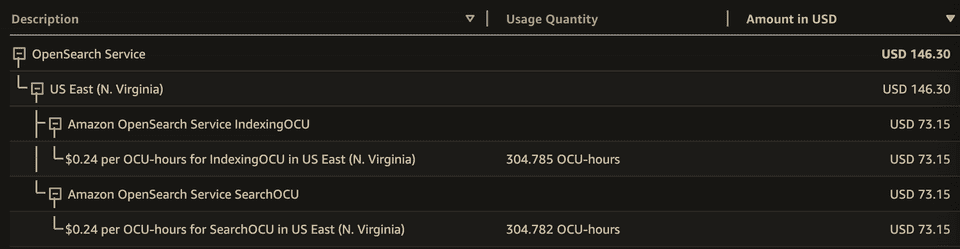

When going serverless OpenSearch, AWS provisions a base setup that charges you for idle time. I did not pick up on this for about a week and a half and unwittingly racked up a $100+ bill. You can see folks griping about this in this Reddit thread. Here’s a screenshot of my Billing page from the console:
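For a rough sense of scale, the sketch below projects that spend to a monthly figure and compares it with the ~$26/month t3.small.search node from the CDK comment above. It assumes the $100 accrued evenly over 10.5 days (my reading of “a week and a half”) and a 30-day month; since the actual bill was $100+, the projection is a lower bound, and none of these numbers are AWS list prices.

```typescript
// Back-of-the-envelope projection from the figures in this post.
// Assumptions: spend accrued evenly, "a week and a half" = 10.5 days,
// and a 30-day month.
function projectMonthlyCost(spend: number, days: number, daysPerMonth = 30): number {
  return (spend / days) * daysPerMonth;
}

const serverlessMonthly = projectMonthlyCost(100, 10.5);
const managedMonthly = 26; // single t3.small.search node, per the CDK comment
const costRatio = serverlessMonthly / managedMonthly;

console.log(`Serverless (projected): ~$${serverlessMonthly.toFixed(0)}/month`);
console.log(`t3.small.search:        ~$${managedMonthly}/month`);
console.log(`Ratio:                  ~${costRatio.toFixed(1)}x`);
```

Even under these generous assumptions, the idle serverless setup works out to roughly ten times the cost of the single small node.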

I immediately explored alternatives once I found out about this. There were two main options I learned about and considered.

S3 Vector Stores

First, it turns out that S3 buckets can be set up as Vector Stores!! This is a relatively recent development and the following video does a great job of explaining how it all comes together at a conceptual, service-to-service level:

This basically combines the affordability of S3 with the power of vector search. Unfortunately, S3 Vector Stores come with a notable limitation, namely the lack of lexical (or “keyword”) search:

Semantic search only: S3 Vectors supports semantic search but not hybrid search capabilities.

Hybrid search, in this context, refers to the combination of lexical and semantic search. A vector storage solution that provides this allows you to look up vector data using both “keyword matching” and “conceptual similarity”.

For CABAL’s use case, hybrid search is particularly important, because I want to make sure I’m able to serve a direct match for a given user query. For example, if the user is asking about the Nod Stealth Tank, I want CABAL to respond with precise information about the tank, not just potentially relevant information about Nod vehicles.
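Here’s a simplified sketch of one way hybrid results can be fused: min-max normalize the keyword scores and the semantic scores separately, then take a weighted average. This is roughly the idea behind OpenSearch’s normalization processor with `min_max` normalization and an `arithmetic_mean` combination, but it is not that implementation. The document IDs and raw scores below are made up to mirror the Stealth Tank example: semantic similarity alone slightly prefers a generic “Nod vehicles” page, while the keyword match pulls the Stealth Tank page to the top.

```typescript
// Simplified hybrid-score fusion. Document IDs and raw scores are invented;
// in practice they would come from a keyword query and a vector query.
type Scores = Record<string, number>; // document id -> raw score

// Rescale scores to [0, 1] so keyword and semantic scores are comparable.
function minMaxNormalize(scores: Scores): Scores {
  const ids = Object.keys(scores);
  const values = ids.map((id) => scores[id]);
  const min = Math.min(...values);
  const max = Math.max(...values);
  const normalized: Scores = {};
  for (const id of ids) {
    // If every score is identical, treat them all as equally relevant.
    normalized[id] = max === min ? 1 : (scores[id] - min) / (max - min);
  }
  return normalized;
}

// Weighted average of the two normalized lists. A document missing from one
// list contributes 0 for that list.
function hybridScores(lexical: Scores, semantic: Scores, lexicalWeight = 0.5): Scores {
  const lex = minMaxNormalize(lexical);
  const sem = minMaxNormalize(semantic);
  const combined: Scores = {};
  for (const id of Object.keys(lex).concat(Object.keys(sem))) {
    combined[id] = lexicalWeight * (lex[id] ?? 0) + (1 - lexicalWeight) * (sem[id] ?? 0);
  }
  return combined;
}

const lexical: Scores = { "stealth-tank": 12.4, "nod-vehicles": 3.1, "tiberium": 0.5 };
const semantic: Scores = { "stealth-tank": 0.82, "nod-vehicles": 0.85, "tiberium": 0.4 };
const combined = hybridScores(lexical, semantic);
console.log(combined);
```

The weight is a tuning knob: pushing `lexicalWeight` up favors exact-name matches, pushing it down favors conceptual similarity.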

Self-managed OpenSearch

Since OpenSearch supports hybrid search, I chose to stick with it. There are other options out there, such as Pinecone, but I ultimately decided to remain within the AWS ecosystem as much as possible.

In this commit, you can see me “discovering the error of my ways” and explicitly defining self-managed resources for storing the vector data. Since this is a pet project, I’ve gone with a very minimal, lightweight instance (t3.small.search).

One thing worth calling out is that OpenSearch has its own dashboard for managing your vector indices (i.e. how the database table is structured), while the AWS OpenSearch console primarily lets you manage the infrastructure backing the databases (i.e. your database clusters). For this reason, I had to set up login access for OpenSearch separately. Getting this done for CABAL was fairly simple. I defined a “master” user and stored its credentials in AWS Secrets Manager, which should be sufficient for a personal project:

```typescript
// Create an OpenSearch Domain which will store the vectorized data.
this.nodOpenSearchDomain = new opensearch.Domain(
  this,
  "NodOpenSearchDomain",
  {
    version: opensearch.EngineVersion.OPENSEARCH_2_11,
    // ...
    fineGrainedAccessControl: {
      // masterUserName is typed as a plain string, hence unsafeUnwrap();
      // masterUserPassword accepts a SecretValue directly.
      masterUserName: nodUserSecret
        .secretValueFromJson(OPENSEARCH_DASHBOARD_USERNAME_KEY)
        .unsafeUnwrap(),
      masterUserPassword: nodUserSecret.secretValueFromJson(
        OPENSEARCH_DASHBOARD_PASSWORD_KEY,
      ),
    },
  },
);
```

Assembling the Knowledge Base

At this point, even with the storage components of our infrastructure in place, we still don’t have a functioning KB. We need to initialize a Bedrock knowledge base construct that binds everything together. This is where my CabalKnowledgeStack comes into play.

First, I declare the KB construct and point it to the self-managed OpenSearch cluster I’ve created:

```typescript
const nodKB = new bedrock.CfnKnowledgeBase(this, "NodKB", {
  name: "NodKB",
  roleArn: nodKBRole.roleArn,
  // Specifying how we will vectorize the raw data from S3.
  knowledgeBaseConfiguration: {
    type: "VECTOR",
    vectorKnowledgeBaseConfiguration: {
      embeddingModelArn: getEmbeddingModelArn(this.region),
    },
  },
  // Specifying how we will store the vectorized data.
  storageConfiguration: {
    type: "OPENSEARCH_MANAGED_CLUSTER",
    opensearchManagedClusterConfiguration: {
      domainArn: nodOpenSearchDomain.domainArn,
      domainEndpoint: `https://${nodOpenSearchDomain.domainEndpoint}`,
      // ...
    },
  },
  // ...
});
```

A key thing to note is that I’ve referenced an embedding model in this knowledge base construct. This model is essentially the “vectorizing function” that converts the raw data from S3 into vector data. The output is then stored in OpenSearch and organized based on how the OpenSearch index has been set up:

```json
// PUT /nod-index
{
  "settings": {
    "index": {
      "knn": true
    }
  },
  "mappings": {
    "properties": {
      "nod-vector": {
        "type": "knn_vector",
        // ...
      },
      "nod-text": {
        "type": "text"
      },
      "nod-metadata": {
        "type": "text"
      }
    }
  }
}
```

This JSON payload, sent to the OpenSearch API, defines the fields of our vector index. The same field names are also passed to the KB configuration in CDK; feel free to check them out in the GitHub source.
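The `getEmbeddingModelArn` helper referenced in the KB construct isn’t shown in this post. Below is a hypothetical sketch of what such a helper could look like, paired with a builder for the index body above. The model ID (Amazon Titan Text Embeddings V2) and its 1024-dimension default are assumptions, not necessarily what CABAL uses. Whatever the model, the `dimension` of the `knn_vector` field has to match the length of the vectors it produces, otherwise OpenSearch rejects them at indexing time.

```typescript
// Hypothetical helper and index builder. The model ID and dimension are
// assumptions: Titan Text Embeddings V2 returns 1024-dimensional vectors
// by default. Swap in whichever embedding model you actually use.
const EMBEDDING_MODEL_ID = "amazon.titan-embed-text-v2:0";
const EMBEDDING_DIMENSION = 1024;

// Foundation-model ARNs leave the account field empty, hence the "::".
function getEmbeddingModelArn(region: string, modelId: string = EMBEDDING_MODEL_ID): string {
  return `arn:aws:bedrock:${region}::foundation-model/${modelId}`;
}

// Create-index body using the field names from this post. "dimension" must
// equal the length of the vectors the embedding model emits.
function buildNodIndexBody(dimension: number = EMBEDDING_DIMENSION) {
  return {
    settings: { index: { knn: true } },
    mappings: {
      properties: {
        "nod-vector": { type: "knn_vector", dimension },
        "nod-text": { type: "text" },
        "nod-metadata": { type: "text" },
      },
    },
  };
}

console.log(getEmbeddingModelArn("us-east-1"));
console.log(JSON.stringify(buildNodIndexBody(), null, 2));
```

Deriving both values from one constant is a small guard against the index and the embedding model drifting apart when either is changed.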

We still haven’t connected our S3 bucket to our KB. To do this, we define a Bedrock DataSource.

```typescript
new bedrock.CfnDataSource(this, "NodDataSource", {
  name: "NodDataSource",
  dataSourceConfiguration: {
    type: "S3",
    s3Configuration: {
      bucketArn: nodS3Bucket.bucketArn,
    },
  },
  knowledgeBaseId: nodKB.attrKnowledgeBaseId,
  // ...
});
```

This DataSource is simply a bridging construct that connects S3 to our KB.

With these two resources in place, we’re ready to start the ingestion process for our raw data. This means:

- Uploading our raw data to S3

- Running the Sync process on Bedrock. This pulls the raw data from S3, vectorizes it using the embedding model, and passes it to OpenSearch for storage.
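One detail the Sync step hides is chunking: before embedding, Bedrock splits each document into smaller chunks so that each vector covers a focused span of text. The sketch below shows fixed-size chunking with overlap, one of the strategies Bedrock offers, in a simplified word-based form. Bedrock itself counts tokens and lets you configure chunking on the data source, so treat the sizes here as arbitrary.

```typescript
// Simplified fixed-size chunking with overlap, counting words instead of
// tokens. Consecutive chunks share `overlapWords` words so that a sentence
// cut at a boundary still appears whole in at least one chunk.
function chunkText(text: string, maxWords: number, overlapWords: number): string[] {
  if (overlapWords >= maxWords) {
    throw new Error("overlapWords must be smaller than maxWords");
  }
  const words = text.split(/\s+/).filter((w) => w.length > 0);
  const chunks: string[] = [];
  const step = maxWords - overlapWords;
  for (let start = 0; start < words.length; start += step) {
    chunks.push(words.slice(start, start + maxWords).join(" "));
    // Stop once the current window reaches the end of the text.
    if (start + maxWords >= words.length) break;
  }
  return chunks;
}

const article = "one two three four five six seven eight nine ten";
console.log(chunkText(article, 4, 1));
```

Smaller chunks make matches more precise but give the LLM less surrounding context per hit; the overlap is a cheap hedge against splitting a key sentence in half.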

And there we go! We’ve now populated a vector store in OpenSearch. This store can be queried by the CABAL agent through the KB to look up information about Tiberian Sun.
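I’ll cover the agent side in a later post, but as a rough sketch: one way to query a KB is the Bedrock Agent Runtime `Retrieve` API. The input shape below is typed locally so it runs without the AWS SDK; with the SDK installed, an object like this would be passed to `RetrieveCommand` from `@aws-sdk/client-bedrock-agent-runtime`. The knowledge base ID is a placeholder and `buildRetrieveInput` is a hypothetical helper. `overrideSearchType: "HYBRID"` requests keyword + semantic search, but support depends on the vector store, so it’s worth verifying for a managed OpenSearch cluster.

```typescript
// Locally typed sketch of a Bedrock Retrieve request; only the fields
// used here are declared.
interface RetrieveInput {
  knowledgeBaseId: string;
  retrievalQuery: { text: string };
  retrievalConfiguration?: {
    vectorSearchConfiguration: {
      numberOfResults?: number;
      // "HYBRID" asks for keyword + semantic search; "SEMANTIC" is vectors only.
      overrideSearchType?: "HYBRID" | "SEMANTIC";
    };
  };
}

// Hypothetical helper that builds a hybrid-search request for a question.
function buildRetrieveInput(knowledgeBaseId: string, question: string, numberOfResults = 5): RetrieveInput {
  return {
    knowledgeBaseId,
    retrievalQuery: { text: question },
    retrievalConfiguration: {
      vectorSearchConfiguration: { numberOfResults, overrideSearchType: "HYBRID" },
    },
  };
}

const input = buildRetrieveInput("KB_ID_PLACEHOLDER", "What is the Nod Stealth Tank?");
console.log(JSON.stringify(input, null, 2));
```

The results come back as text chunks with scores, which the agent can then fold into its prompt before answering in CABAL’s voice.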

Next Steps

I plan to circle back to this and write about some of the things I had to navigate while working with AWS Lambda and Lambda Web Adapters. I’ve not started working on the front-end UI for this project either, but I’m excited to do so. I’m sure I’ll have plenty more to say once I’ve crossed that bridge.

It’s good to be writing about code again. If you’re reading this, happy new year!